Key Takeaways:

- This article offers guidance on navigating existing frameworks and on getting started with AI governance. We developed a process with actionable steps and specific documents, which you can request here to jump-start your strategy.

- AI governance is a system of rules, processes, frameworks, and tools within an organization to ensure the ethical and responsible development of AI.

- Implementing AI governance is crucial because of existing and upcoming regulation as well as PR and societal risks. It also leads to better and more efficient AI development.

- Many frameworks try to define AI governance in theory and offer practical implementation ideas. This article covers five of them: the frameworks from the IEEE, the EU, the Montreal Declaration, AIGA, and NIST.

- AI governance is interdisciplinary and needs to be rooted in the AI Team, Development Team, Legal Team, Domain Experts / Users, and Customer Success Team.

As AI systems become more prevalent and integral to various industries, concerns about data privacy, algorithmic bias, and the impact of AI on decision-making have been growing. A Gartner survey found that 41% of organizations in the U.S., U.K., and Germany have experienced an AI privacy breach or security incident, and numerous news articles and reports have covered the potential risks and negative effects of AI implementation. For example, the use of AI in hiring has been criticized for perpetuating bias and discrimination, while the use of AI in criminal justice systems has been found to be inaccurate and unfair in certain cases. Organizations therefore need not only to prioritize the ethical and responsible use of AI, but also to actively address and mitigate the risks and negative consequences that may arise.

AI governance is the key to enabling trustworthy, responsible, and efficient AI systems. Establishing governance frameworks as soon as organizations implement AI is crucial to ensure its development and use align with ethical and societal standards. In this post, we will explore what AI governance is, why it is important, and the frameworks that have been developed to guide its implementation. We will also discuss the process of putting these frameworks into practice and introduce a practice-tested framework for AI governance.

What is AI Governance and why should I care about it?

The central function of AI governance is to ensure the ethical and responsible development and use of AI. AI governance is a system of rules, processes, frameworks, and technological tools that an organization employs to ensure that its use of AI aligns with organizational principles, legal requirements, and social and ethical standards. It is part of the organization’s governance landscape and intertwines with IT governance, data governance, and general governance.

AI governance enables organizations to unleash the full potential of AI while mitigating risks like bias, discrimination, and privacy violations. The regulatory landscape is evolving quickly: the upcoming EU AI Act, the US Blueprint for an AI Bill of Rights, and Chinese AI regulation all point toward AI governance systems becoming obligatory.

Regulation will cause compliance overhead and heavy fines for companies that fail to prepare. This is one reason companies should set up an AI governance process today and ensure that the AI systems they develop meet or exceed regulatory requirements. Beyond that, AI governance not only makes AI fair and trustworthy but also increases the adoption of AI and improves business outcomes. A structured process enhances transparency, understanding, and resource allocation, which makes AI development more efficient.

Gartner expects that by 2026, organizations that operationalize AI transparency, trust, and security will see their AI models achieve a 50% improvement in terms of adoption, business goals, and user acceptance.

Start today and make AI governance your competitive advantage. Learn how below.

AI Governance Frameworks

Ethical principles like fairness must be translated into processes and tasks to make them actionable.

Even though standards for AI are mostly non-binding, several governance frameworks have been published to guide the development and use of AI. Some of the most prominent frameworks include:

- The IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems

- The European Union’s Ethics Guidelines for Trustworthy AI as a basis for the EU AI Act

- The Montreal Declaration for Responsible AI

- The AIGA AI Governance Framework

- NIST Artificial Intelligence Risk Management Framework

In the following, we give a rough overview of the core principles and distinguishing factors of these frameworks, all summarized again in the table below. If you are mainly interested in deriving actionable tips on how to set up your organization's governance process, feel free to skip to the next section!

The IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems

The framework is a collection of different standards, including specific documents on topics such as system design, certification, and bias. It is built on eight general principles: transparency, accountability, awareness of limitations, safety and well-being, reliability and dependability, equity, inclusivity, and privacy protection.

In addition to the eight principles, it also includes a set of metrics to assess the extent to which AI systems adhere to these principles. These metrics are designed to provide a standardized way of evaluating the ethical and responsible use of AI across different industries and applications. The metrics consider factors such as the level of transparency of the AI system, the degree to which the system is accountable to humans, and the measures in place to protect user privacy.

The European Union’s Ethics Guidelines for Trustworthy AI

In 2019, the EU’s High-Level Expert Group on AI (HLEG) developed guidelines on trustworthy AI that served as a basis for the policy recommendations in preparation for the EU AI Act. The guidelines define trustworthy AI as lawful, ethical, and robust.

The guidelines are based on seven key requirements that mostly overlap with the eight principles of the IEEE. They additionally introduce the concept of human oversight and extend the well-being requirement from societal to environmental well-being.

The EU provides an Assessment List for Trustworthy Artificial Intelligence (ALTAI) online to support AI developers and deployers in developing Trustworthy AI. This list was also translated into a web-tool, accessible here.

The Montreal Declaration for Responsible AI

Like the two frameworks above, the Montreal Declaration for Responsible AI is built on a set of principles, ten in this case. It largely covers the same areas, includes ecological responsibility like the EU guidelines, and additionally emphasizes democratic participation, respect for autonomy, and prudence during development.

These principles have resulted in 8 recommendations that provide guidelines for achieving the digital transition within the ethical framework of the declaration, such as implementing audits and certifications, establishing independent controlling organizations, providing ethics education for stakeholders involved in development, and empowering users.

The AIGA AI Governance Framework

The framework developed by the University of Turku focuses on putting ethical AI into practice and emphasizes practical recommendations. It aims to support compliance with the upcoming EU AI Act.

Similarly to the other frameworks, it consists of 7 core principles: responsibility, transparency, explainability, accuracy and fairness, privacy and security, human control of technology, and professional responsibility. The differentiator is that those principles are translated into tasks along the AI lifecycle, focused on the AI system as a whole and the algorithms and data used. The goal is to give clear steps for using AI, from planning the design to monitoring the deployed systems.

NIST Artificial Intelligence Risk Management Framework

The U.S. Department of Commerce’s National Institute of Standards and Technology (NIST) has published this framework to help organizations manage the risks of AI. Like the AIGA, it aims to provide a flexible, structured, and measurable process to translate governance into practice.

It defines four core functions: govern, map, measure, and manage. Govern is a cross-cutting function that informs the other three, which deal with mapping, measuring, and managing AI risks. Each of these high-level functions is broken down into categories and subcategories containing specific actions and outcomes.

Even though it gives a deep understanding of actionable tasks, it proposes neither an ordered set of steps nor a checklist of questions.

Putting Frameworks into Action

While governance frameworks guide ethical and responsible AI development and use, they must be put into practice to be effective. Implementing a governance framework requires a coordinated effort across multiple domains within the organization. It is essential to assign responsible stakeholders in each domain to foster exchange between technical teams (AI development team and product team), project management, domain specialists (e.g., involved business units and sales), governance / legal, and customer success.

All of the frameworks summarized above share similar core principles for defining the large field of AI governance. NIST and AIGA go further and offer more detailed tasks to be integrated into AI development. Yet they all lack clear guidance on implementing a safe and feasible governance process for organizations.

This is why we aim to provide you with ideas on how to get started in setting up your organization’s AI governance process.

The key to success is to prioritize values-driven, ethically aligned design at the beginning of the development process. By transforming governance from a burden into an asset, organizations can maximize their innovations and make AI development responsible and efficient at the same time.

Setting up your AI Governance Process

There is no one-size-fits-all solution for AI governance, as every company is unique in its risk preferences and processes, just like every AI use case varies. This guideline should help you get started with your own AI governance - we are happy to help!

- Appoint an AI governance lead. In a small company, this doesn’t need to be a full-time role; one of the involved parties can take it on as an additional responsibility. The lead is in charge of implementing and maintaining the AI governance process and keeps track of deliverables and manual tasks.

- Choose your governance framework of orientation. The AIGA gives a good orientation for actionable tasks, with EU AI Act compliance already integrated as far as possible.

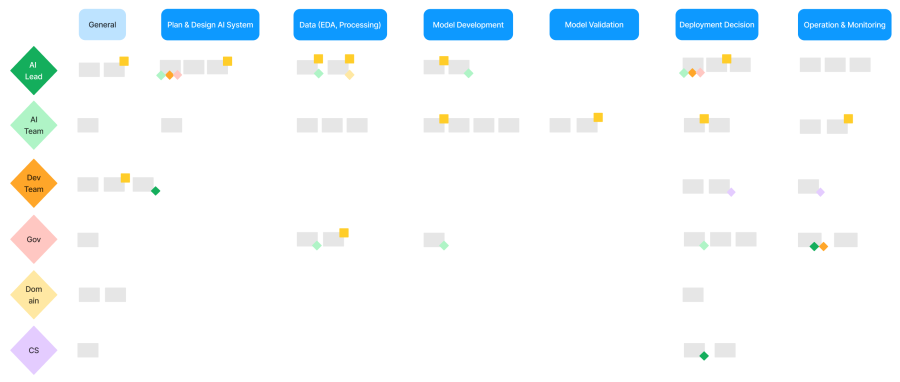

- Map your company’s AI lifecycle on a horizontal axis and add all relevant stakeholders on a vertical axis to be able to allocate individual governance tasks. See the image below for an example.

- Draw up all relevant tasks and allocate them to the fitting lifecycle stage and the right stakeholder.

- Bring the tasks to life by setting up the relevant documents and tools. Unfortunately, the frameworks don’t specify the content of the required documentation, for example, a project charter or development documentation. Nor do they offer further information on tools, e.g., SHAP for AI explainability. This means a lot of work and uncertainty.

→ To make your life easier in the jungle of tasks and stakeholders, we came up with our own sample process of lifecycle and stakeholder-specific tasks, including relevant documents and tools. Request a free copy here.

- Define the regularity of health checks to re-assess all AI systems after initial deployment.

- Set up a central place for all documents, e.g., in your wiki or cloud storage.

- Onboard all affected stakeholders and ensure they implement the tasks into their workflow.
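The mapping and allocation steps above can be sketched in a few lines of Python. This is only a minimal illustration, not part of any of the frameworks: the lifecycle stage names, the example tasks, the 90-day interval, and all function names (`build_matrix`, `stages_without_tasks`, `next_health_check`) are assumptions made for this sketch. The stakeholder groups are the ones named in this article.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed lifecycle stages (horizontal axis); replace with your own lifecycle.
STAGES = ["planning", "design", "development", "deployment", "monitoring"]

# Stakeholder groups named in this article (vertical axis).
STAKEHOLDERS = [
    "AI team",
    "Development team",
    "Legal team",
    "Domain experts / users",
    "Customer success team",
]


@dataclass(frozen=True)
class GovernanceTask:
    name: str
    stage: str
    owner: str


def build_matrix(tasks):
    """Group task names by (owner, stage) cell; reject unknown stages or owners."""
    matrix = defaultdict(list)
    for task in tasks:
        if task.stage not in STAGES:
            raise ValueError(f"unknown lifecycle stage: {task.stage!r}")
        if task.owner not in STAKEHOLDERS:
            raise ValueError(f"unknown stakeholder: {task.owner!r}")
        matrix[(task.owner, task.stage)].append(task.name)
    return dict(matrix)


def stages_without_tasks(matrix):
    """Return the lifecycle stages in which no stakeholder has a task yet."""
    covered = {stage for (_owner, stage) in matrix}
    return [stage for stage in STAGES if stage not in covered]


def next_health_check(last_check: date, interval_days: int) -> date:
    """Date of the next re-assessment, given a fixed interval in days."""
    return last_check + timedelta(days=interval_days)


# Illustrative placeholder tasks, not taken from any framework.
EXAMPLE_TASKS = [
    GovernanceTask("Write project charter", "planning", "AI team"),
    GovernanceTask("Review legal requirements", "planning", "Legal team"),
    GovernanceTask("Maintain development documentation", "development", "Development team"),
    GovernanceTask("Collect user feedback", "monitoring", "Customer success team"),
]

if __name__ == "__main__":
    matrix = build_matrix(EXAMPLE_TASKS)
    for (owner, stage), names in sorted(matrix.items()):
        print(f"{owner:24} | {stage:12} | {', '.join(names)}")
    print("Stages without tasks:", stages_without_tasks(matrix))
    print("Next health check:", next_health_check(date(2024, 1, 1), 90))
```

Listing the stages without any allocated task is a quick way to spot gaps in the matrix before onboarding stakeholders. In practice, a spreadsheet or wiki table may serve the same purpose.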

Getting started on AI governance is necessary to stay competitive, but it can be overwhelming - especially with the range of frameworks and tools out there.

Conclusion

AI governance is essential to ensure the ethical and responsible development and use of AI. Governance frameworks provide guidance but are not actionable enough. To be effective, these frameworks must be put into practice through a coordinated, multistep effort across several domains. We are looking forward to further updates on the legislation and the progress of governance frameworks and will keep you posted.

It is crucial for companies to implement a structured AI governance process to prepare for upcoming regulation and stay competitive. A good governance process brings structure, assists with reporting, and increases transparency, which not only makes AI development more responsible but also more efficient.

Supplementary free resources with a detailed governance process can be found here.

If there are any questions regarding this topic or article, please reach out to hello@trail-ml.com.

*** Originally published at https://www.trail-ml.com ***
