The EU has proposed the EU AI Act to ensure trustworthy and ethical AI across Europe. It introduces a range of requirements and obligations for companies providing or using AI, especially in so-called “high-risk areas”. Find out if your AI system qualifies as high-risk and what that means for you below!

Key Takeaways:

- Companies that fail to comply with the AI Act may face significant penalties, including fines of up to €35 million or 7% of the company’s annual global turnover, whichever is higher, for violations involving prohibited systems.

- If your AI system qualifies as high-risk, you must meet various requirements during development and post-market.

- Key obligations for high-risk providers include setting up an extensive quality management system, keeping logs, preparing detailed technical reports that act as a basis for audits, undergoing a conformity assessment, and implementing human oversight, among others.

- We are happy to get in touch about helping you set up the right tools and processes to increase AI transparency and documentation quality in your organization. Find more information here.

What is the EU AI Act?

The EU AI Act is a legislative proposal that the European Commission introduced on the 21st of April 2021 and on which the EU co-legislators reached a provisional agreement on the 8th of December 2023. It aims to regulate AI across the EU to make it trustworthy and ethical. The Act introduces a risk-based approach, specifying four different levels of risk: unacceptable risk, high risk, limited risk, and minimal risk. The compliance obligations for companies vary according to these categories, with companies in the high-risk category having the most obligations to fulfill.

Does my AI classify as high-risk?

The EU defines high-risk AI systems as those that have the potential to cause significant harm to EU citizens or the environment. Examples of high-risk AI systems include those used in critical infrastructure, transportation, and healthcare. The Act also includes AI systems that are used to make decisions that have legal or similarly significant effects, such as credit scoring or hiring decisions.

Consult this article to find out whether your system classifies as high-risk, or see Annexes II and III of the EU AI Act, which provide an extensive list of AI systems that classify as high-risk.

Be cautious: failing to comply can cost you millions!

Companies that fail to comply with the AI Act and its extensive measures for high-risk AI systems may face significant penalties. The Act introduces fines of up to 7% of the company’s annual global turnover or €35 million, whichever is higher, for violations involving prohibited systems. All other violations can receive fines of up to €15 million or 3% of annual global turnover. Companies may also face reputational damage and legal action from affected individuals.
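
To make the arithmetic concrete, here is a minimal sketch of the fine ceilings described above. The function name and parameters are hypothetical, and applying the "whichever is higher" rule to the second tier is an assumption on our side. It is an illustration only, not legal advice.

```python
def max_fine_eur(annual_global_turnover_eur: int, prohibited_system: bool) -> float:
    """Return the upper bound of a fine in euros (illustrative sketch only).

    Assumption: the "whichever is higher" rule applies to both tiers.
    """
    if prohibited_system:
        fixed_cap_eur, turnover_percent = 35_000_000, 7
    else:
        fixed_cap_eur, turnover_percent = 15_000_000, 3

    # Share of turnover, computed from an integer percentage to avoid
    # floating-point surprises with values such as 0.07.
    turnover_based_eur = annual_global_turnover_eur * turnover_percent / 100
    return max(fixed_cap_eur, turnover_based_eur)


if __name__ == "__main__":
    # A company with EUR 1 billion turnover that deploys a prohibited system:
    print(max_fine_eur(1_000_000_000, prohibited_system=True))
    # A company with EUR 100 million turnover and another type of violation:
    print(max_fine_eur(100_000_000, prohibited_system=False))
```

For smaller companies the fixed amount is the binding ceiling, while for large companies the turnover-based share quickly exceeds it.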

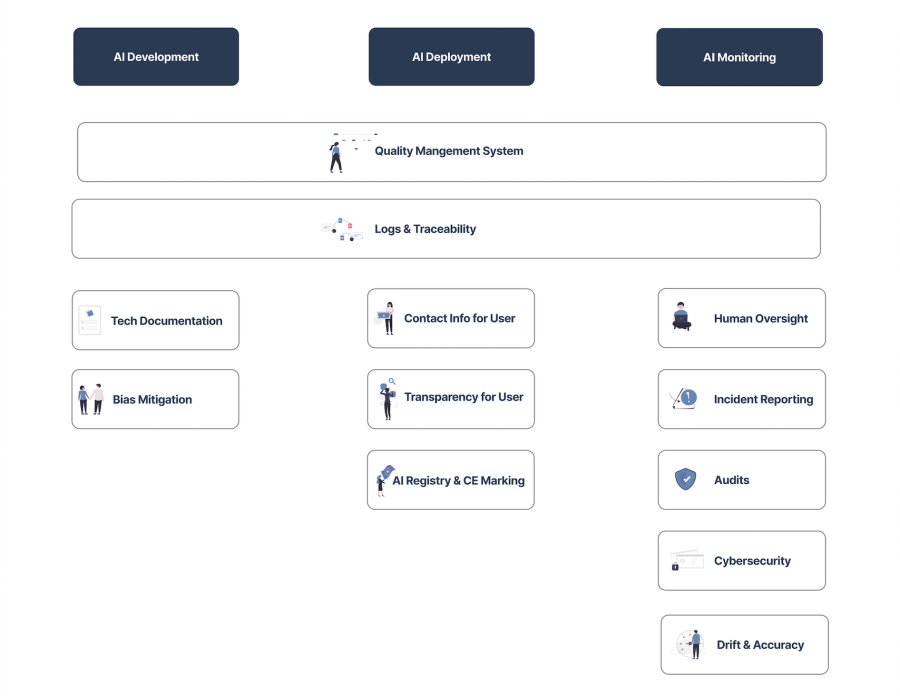

This is what’s coming: The 10 Requirements & Obligations for High-Risk Systems

Title III, Chapters 2 and 3 of the current draft of the EU AI Act establish requirements and obligations for providers of high-risk systems. We summarized them below to give a better overview of what lies ahead for companies in the high-risk sectors. The AI Act is currently being criticized for not specifying how to implement those requirements, which still leaves room for change.

The EU AI Act currently attaches a single set of generic requirements to all high-risk AI systems, but the requirements should be adapted to different applications to minimize overhead and ensure the appropriateness of measures.

1. Set up a Quality Management System that includes the following:

- Risk management system as a continuous & iterative process throughout the entire lifecycle to evaluate and mitigate all possible risks and ensure adequate design & development of the AI system.

- Data management system to enable data governance. Split the data into training, testing, and validation data sets and document the split. Formulate relevant assumptions before assessing the data sets, and document their quality and distribution, including bias detection tests.

- Post-market monitoring processes & reporting of incidents.

- Test processes for the AI system throughout the development process and before placing the system on the market. Define suitable test metrics and thresholds before starting development.

- Accountability framework with responsibilities.

- Reporting proportionate to the size of the organization.

2. Conduct a fundamental rights impact assessment considering aspects such as the potential negative impact on marginalized groups and the environment. [Latest addition to high-risk obligations after the trilogue on 8th of December 2023]

3. Provide contact information for users / other stakeholders.

4. Keep logs over the duration of the system’s life cycle to enable traceability and monitoring. The logs must include the time period of system usage, input data, and people involved in verifying results.

5. Implement transparency measures like providing user instructions for the system, information about characteristics, capabilities, and limitations of system performance, as well as output interpretation tools and a description of mechanisms included within the system.

6. Keep relevant documentation for ten years. That includes:

- Detailed technical documentation (= audit documentation, according to Annex IV). The content may be streamlined for start-ups and SMEs in the future to decrease the administrative burden.

- Quality management system documentation

- Legislative interaction documentation

- EU declaration of conformity (→ see Annex V)

7. Register your AI system and undergo a conformity assessment to obtain the declaration of conformity and the corresponding CE marking. This is also necessary for non-European providers placing AI on the EU market, which also implies full compliance with all other requirements. Ensure necessary mitigation actions in case of non-conformity and inform the relevant authority.

8. Demonstrate conformity upon request in an audit. This includes detailed technical documentation and the latest logs of the AI system’s performance.

9. Implement human oversight during AI system use to understand capacities and limitations, to interpret outputs correctly, or to intervene in the system.

10. Implement cybersecurity measures to prevent attacks, ensure system robustness, and prevent failures. Uphold model accuracy and ensure that AI systems that continue to learn are designed to avoid biased outputs that could influence future operations.
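
As a starting point for the logging obligation (item 4), a log entry could be sketched as follows. This is a minimal sketch, not a format prescribed by the Act: the `UsageLogRecord` schema, its field names, and the JSON Lines file layout are all assumptions made for illustration. It only captures the three elements named above, namely the time period of usage, the input data, and the people involved in verifying results.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class UsageLogRecord:
    """One traceability entry for a period of system use (hypothetical schema)."""

    usage_start: datetime
    usage_end: datetime
    input_data_ref: str  # pointer to, or hash of, the input data that was used
    verified_by: List[str] = field(default_factory=list)  # people who checked results

    def to_json_line(self) -> str:
        """Serialize the record as a single JSON line with ISO 8601 timestamps."""
        record = asdict(self)
        record["usage_start"] = self.usage_start.isoformat()
        record["usage_end"] = self.usage_end.isoformat()
        return json.dumps(record, sort_keys=True)


def append_record(path: str, record: UsageLogRecord) -> None:
    """Append the record to an append-only log file, one JSON object per line."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(record.to_json_line() + "\n")


if __name__ == "__main__":
    example = UsageLogRecord(
        usage_start=datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc),
        usage_end=datetime(2024, 1, 1, 9, 5, tzinfo=timezone.utc),
        input_data_ref="sha256:<hash of the input batch>",
        verified_by=["reviewer-1"],
    )
    print(example.to_json_line())
```

Storing a reference or hash instead of the raw input keeps the log small and avoids duplicating personal data, but whether that is sufficient for your system is a design decision to check against the final text of the Act.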

How can I start preparing already today?

Complying with the EU AI Act can sound daunting for high-risk companies.

We suggest setting up governance processes today. Organizations using high-risk applications will likely need to comply with the AI Act in early or mid-2025. Risk management, logging, and comprehensive documentation ensure that everything you develop today can still be used once the regulation applies. They also make your organization more transparent, which increases efficiency. We will update this article as soon as the AI Act is finalized.

Minimize technical, reputational, and societal risks of high-risk AI systems and increase understanding within your organization and among your customers.

If there are any questions regarding this topic or article, please reach out to hello@trail-ml.com.

*** Originally published at https://www.trail-ml.com ***
